Common DFOS tools: Documentation

dfos = Data Flow Operations System, the common tool set for DFO

autoDaily

Description

This tool is the standard wrapper tool providing the automatic, incremental daily dfos workflow. It provides automatic processing of CALIB data, all the way from detection of new data down to processing and scoring.

The tool calls ngasMonitor to search for new CALIB data. If nothing is found, it exits. If new CALIB data are found, the tool creates new ABs, executes them, ingests QC1 parameters, creates QC reports and scores, and finally updates any new HC report found by scoreQC.

This strategy ensures that at the end of execution all HC plots are properly updated. Typically the tool is called as a cronjob, once per hour. Since it works on new data only, it processes the data incrementally, providing up-to-date feedback on the HC monitor and at the same time a good load balance.

The tool can be started externally from the HC monitor pages, using a php script. This mode is called "forced" mode and is designed for PSO staff to launch an upgrade of the HC monitor when they need urgent feedback and don't want to wait for the cronjob to start.

The tool supports the BADQUAL workflow of calChecker, by adding new ABs for TODAY to the list of offered ABs on the calChecker BADQUAL list (see there).

Workflow. This is the workflow of autoDaily:

| autoDaily | check for new fits files | download and processing | updating new HC jobs |
|---|---|---|---|
| calling ... | ngasMonitor | createReport, createAB, createJobs + processAB + processQC | scoreQC (as part of QC reports), execHC_TREND [trendPlotter] |
| finding ... | new fits files | new QC1 parameters | new HC jobs |

Date list list_data_dates. autoDaily reads a file $DFO_MON_DIR/list_data_dates. This file is filled by ngasMonitor with processing dates. autoDaily loops over that date list. In incremental mode, that list usually contains only the current date, unless there is a delivery backlog (the data transfer is disturbed) and files are delayed. With the configuration key FLASHBACK=NO you can keep dates older than two days out of the date list.

You can also edit the date list to contain a custom set of dates (e.g. for reprocessing). Then you call the tool as 'autoDaily -D' from the command line.
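The effect of FLASHBACK on the date list can be illustrated with a small Python sketch. It is not taken from the tool itself: the function names are hypothetical, the file is assumed to hold one ISO date (YYYY-MM-DD) per line, and a date exactly two days old is assumed to be kept.

```python
from datetime import date, timedelta


def parse_date_list(text):
    """Parse a date list, assuming one ISO date (YYYY-MM-DD) per line."""
    return [date.fromisoformat(line.strip())
            for line in text.splitlines() if line.strip()]


def filter_date_list(dates, today, flashback=False, max_age_days=2):
    """Return the dates to loop over.

    flashback=False mimics FLASHBACK=NO: dates older than max_age_days
    are dropped. flashback=True (FLASHBACK=YES) keeps the list unchanged.
    """
    if flashback:
        return list(dates)
    cutoff = today - timedelta(days=max_age_days)  # assumption: cutoff date itself is kept
    return [d for d in dates if d >= cutoff]
```

With a list containing a five-day-old date and the current date, `filter_date_list(..., flashback=False)` would return only the current date, while `flashback=True` would return both.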

Execution flow. The tool then executes the following steps:

I. Normal execution mode:

for each DATE in $DFO_MON_DIR/list_data_dates:

- headers: downloaded incrementally (HdrDownloader -t 24)
- check for new data: ngasMonitor
- new data found: for each date with new data:
  - create/update data report: createReport
  - completeness check: comparison between available headers and NGAS file list; any mismatch -> date is INCOMPLETE, otherwise COMPLETE (relevant for bulk mode data delivery)
  - check for other processes calling createAB (like processPreImg) as a safety measure; if found, wait 60 sec and try again; do so until OTHER_TIMEOUT is reached (50 minutes or less, as configured)
  - execute $DFO_BIN_DIR/$PGI_PREPROC if configured
  - create the CALIB ABs:
    - createAB -m CALIB -a -i (automatic and incremental mode)
  - create processing job and QC job (calling createJob -m CALIB); it is called JOBS_AUTO and does not interfere with the regular JOBS_FILE as used by createJob
  - launch the processing and QC jobs
- launch execHC_TREND (under $DFO_JOB_DIR) which is dynamic and is filled with trendPlotter calls (all reports are collected which are affected by any new QC1 parameter from the QC1 database).
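The completeness check in the list above is essentially a set comparison. The following Python sketch shows the idea only; the function name and the representation of both lists as file-name collections are assumptions, not the tool's actual implementation.

```python
def completeness(header_files, ngas_files):
    """Compare available headers with the NGAS file list.

    Returns a (status, mismatches) tuple, where status is 'COMPLETE'
    if both lists name exactly the same files and 'INCOMPLETE' otherwise.
    """
    headers = set(header_files)
    ngas = set(ngas_files)
    # Symmetric difference: files present in only one of the two lists.
    mismatches = sorted(headers ^ ngas)
    status = "COMPLETE" if not mismatches else "INCOMPLETE"
    return status, mismatches
```

Returning the mismatching names along with the status makes it easy to log which file caused a date to be flagged INCOMPLETE.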

II. Execution if previous session has not yet finished ($OTHER_TIMEOUT): the tool checks for another session still being executed. This could happen if many ABs have been created and are still executing. The tool then goes into a "wait" loop and checks every 60 sec whether the other autoDaily session has finished, until the total configurable TIMEOUT has elapsed, after which it terminates (a notification email is sent).

With this strategy, it is guaranteed that autoDaily will always keep the HC reports up-to-date within 1 hour, no matter if it is executed under

- incremental processing conditions and "normal" load (all processing done within one hour)

- incremental processing conditions and high load (then one go of incremental processing is skipped but will be re-tried in one hour).

See also the workflow table.

Since autoDaily is called again after 1 hour, the timeout parameter should not be longer than 60 minutes (actually a maximum value of 50 minutes is hard-coded).
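The wait loop with its capped timeout can be sketched in Python as follows. This is an illustration under assumptions: `other_running` is a hypothetical callable that reports whether another session is active, and the `sleep` and `clock` parameters exist only so the loop can be exercised without real waiting.

```python
import time

MAX_TIMEOUT_MIN = 50  # upper limit described in the text as hard-coded


def wait_for_other_session(other_running, timeout_min, poll_sec=60,
                           sleep=time.sleep, clock=time.monotonic):
    """Poll every poll_sec seconds until no other session is running.

    Returns True if this session may proceed, False if the (capped)
    timeout elapsed first, in which case the caller would terminate
    and send a notification.
    """
    limit_sec = min(timeout_min, MAX_TIMEOUT_MIN) * 60
    start = clock()
    while other_running():
        if clock() - start >= limit_sec:
            return False
        sleep(poll_sec)
    return True
```

Capping the limit below the one-hour cron cadence means a waiting session gives up before the next scheduled session starts, so waiting sessions cannot pile up.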

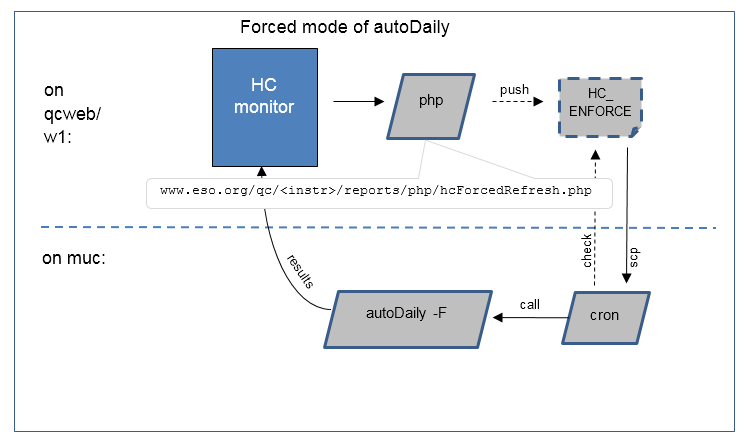

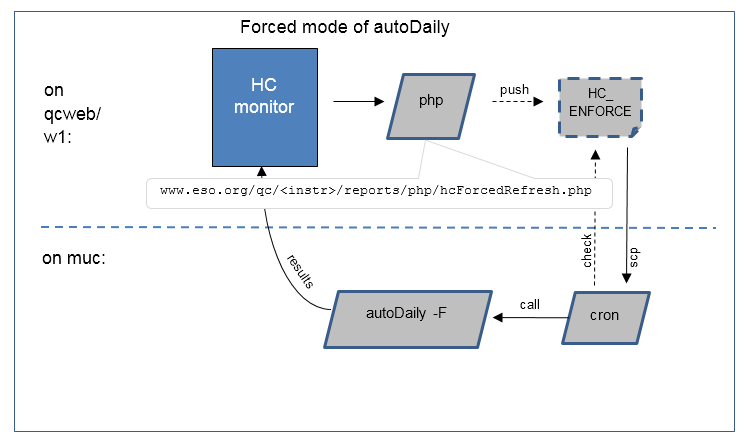

III.

Forced execution: the tool can also be called from the HC monitor, with a php form that starts a password-protected dialog. If confirmed, the user can set a trigger which is recognized by a cronjob on the operational machine and used to start an autoDaily session with the usual functionality described above. (For security, there is no direct access from the web server to the operational machine.) The external user can follow the log file and also receives mails with the start and finish information. The intention of this mode is to allow Paranal staff to launch a refresh of the HC monitor at short notice, e.g. when they have created new calibration data and wait for quick feedback on the HC monitor.

For the forced mode to work properly, the following components are needed:

| what | provided by |
|---|---|
| 1. php interface on www.eso.org/qc/<instr>/reports/php | autoDaily (uploaded every time upon execution) |
| 2. cronjob to check for trigger set by php script | dfosCron -t autoDaily (to be run once per minute) |
| 3. execute the forced mode | autoDaily -F |

Sketch of the forced mode of autoDaily: The user creates a request for running autoDaily, using the php interface and creating a file HC_ENFORCE. A monitoring cronjob on the muc machine discovers this "trigger file" and then launches 'autoDaily -F'. Processes on the web server and on the muc machine are strictly separated. It is not possible to launch a muc process from the web server. Execution of the processing job on the muc server is controlled by the cronjob. The trigger file must have the correct name and a defined content, otherwise it is ignored.
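The rule that a trigger file is honoured only if both its name and its content are as expected could be checked along these lines. The Python sketch below is hypothetical: the file name HC_ENFORCE comes from the description above, but the expected content string is a placeholder, since the real content is not documented here.

```python
from pathlib import Path

TRIGGER_NAME = "HC_ENFORCE"        # file name as given in the text
EXPECTED_CONTENT = "autoDaily"     # placeholder; the real defined content is not documented here


def trigger_is_valid(path, expected=EXPECTED_CONTENT):
    """Return True only for a regular file with the right name and content."""
    p = Path(path)
    if p.name != TRIGGER_NAME or not p.is_file():
        return False
    return p.read_text().strip() == expected
```

A cronjob-side checker built this way would silently ignore anything else dropped into the trigger location, which matches the strict separation between web server and operational machine.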

Workflow:

|

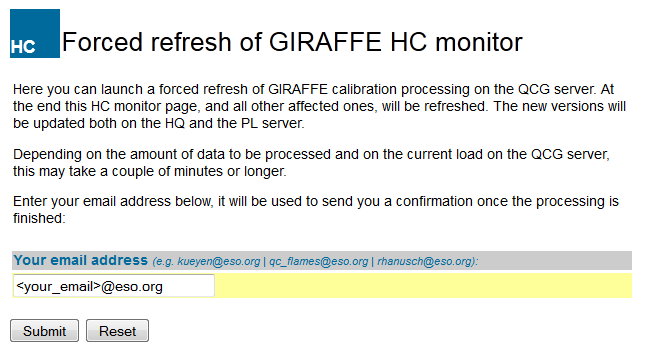

- Click the button 'forced refresh', on any of the HC reports.

- You are prompted for authentication.

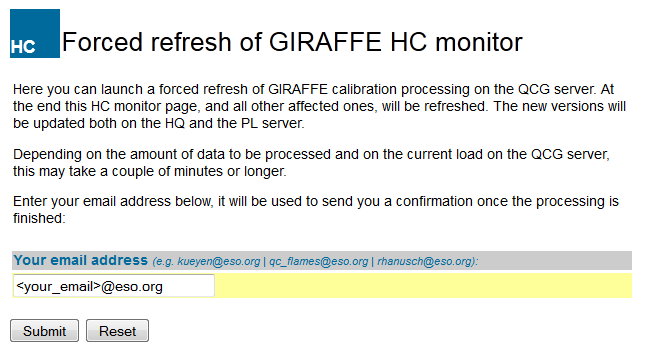

- The dialogue "Forced refresh" asks you to enter your email address (can be operational or personal). It is used to notify you via email about start and end of the processing. The defaults are per instrument: the telescope account, the QC name, and the name of the QC scientist. |

|

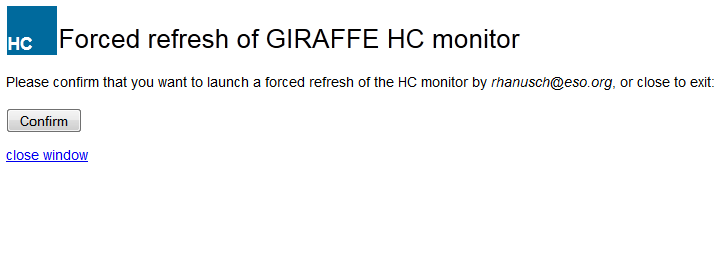

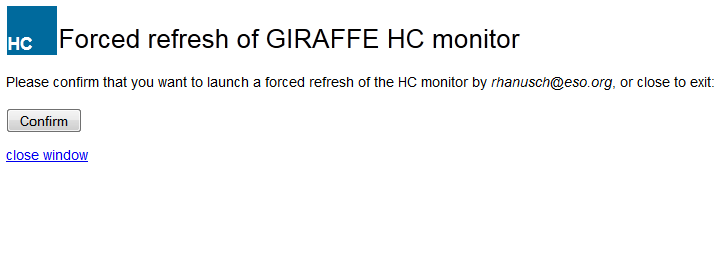

| Then you are asked for confirmation: |

|

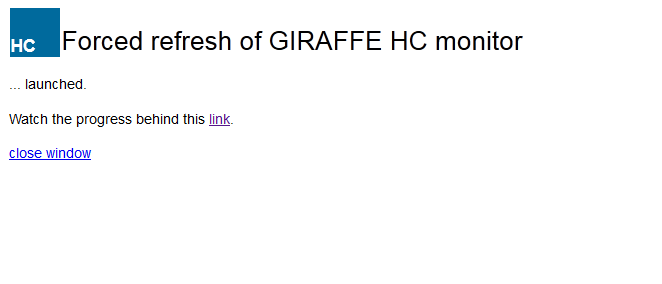

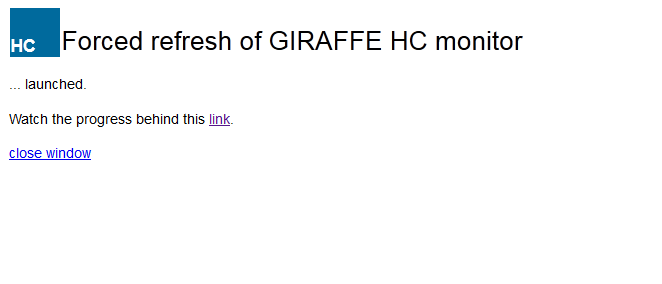

Next, the trigger is set. As soon as the monitoring cronjob picks up the trigger (after one minute at most), the process will be started on the operational machine of QC Garching (if no other similar process is already running).

You can watch the progress of the processing behind the marked link. |

|

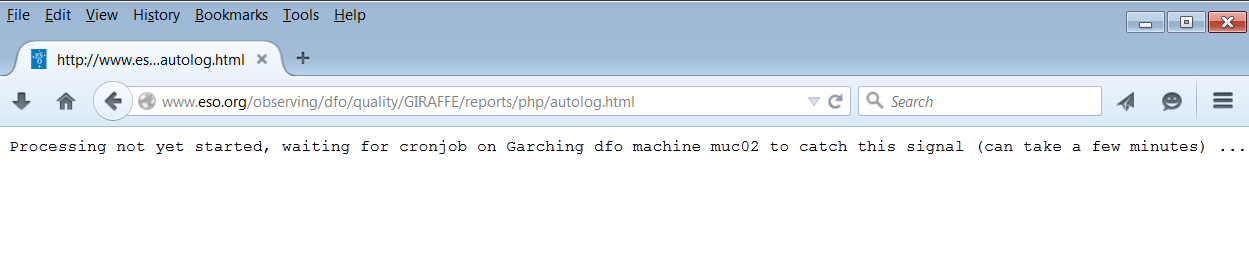

| While waiting, the message is the following: |

|

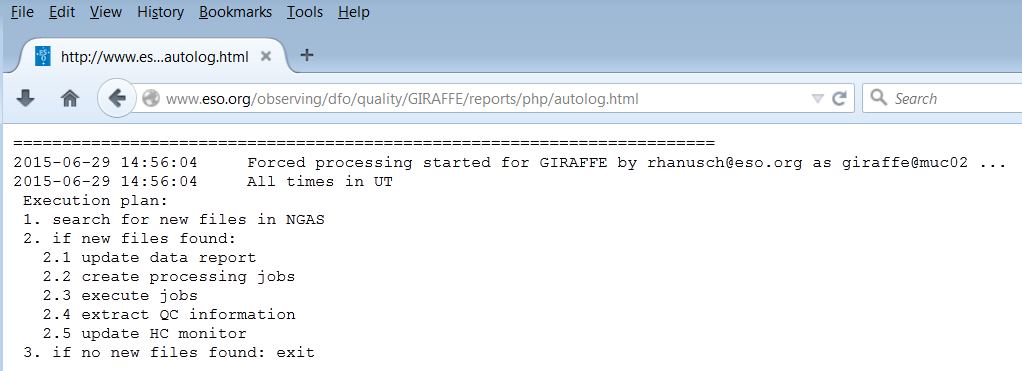

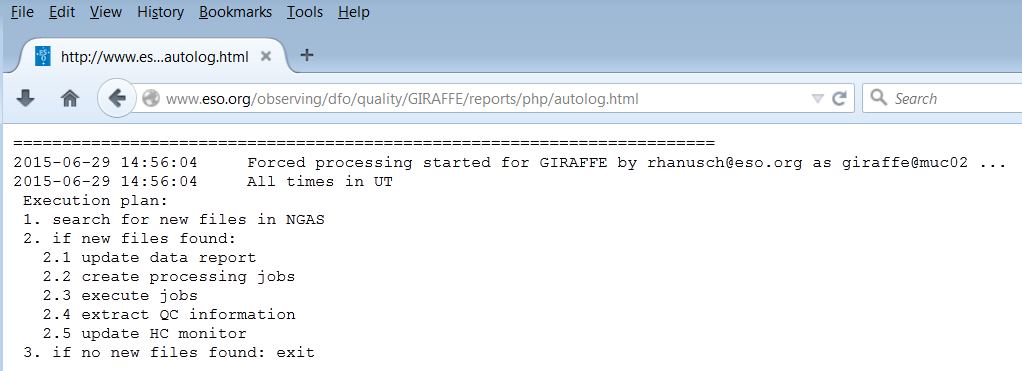

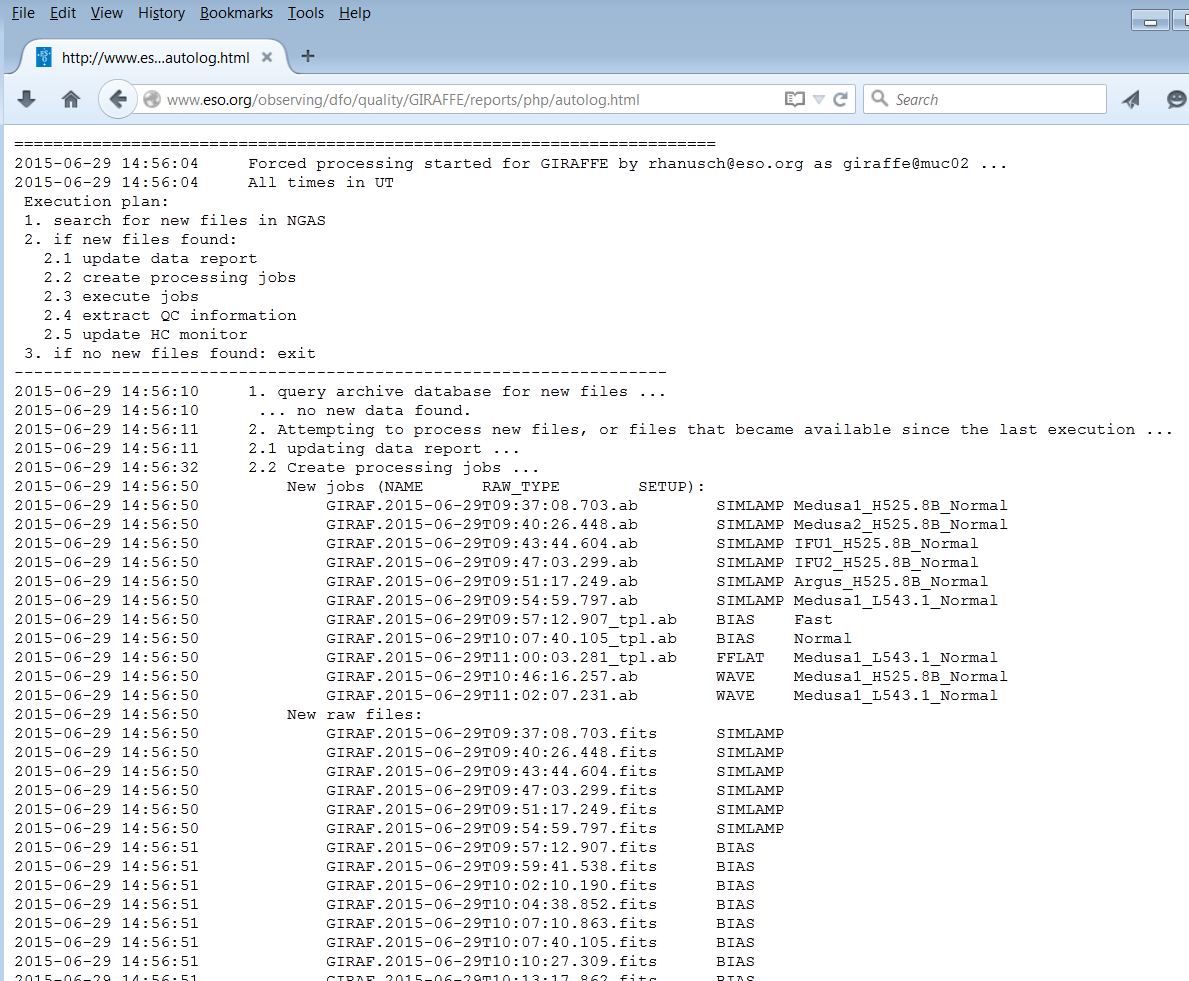

| When the process is started, it sends a mail to the email address just entered. Then it displays first the execution plan ... |

|

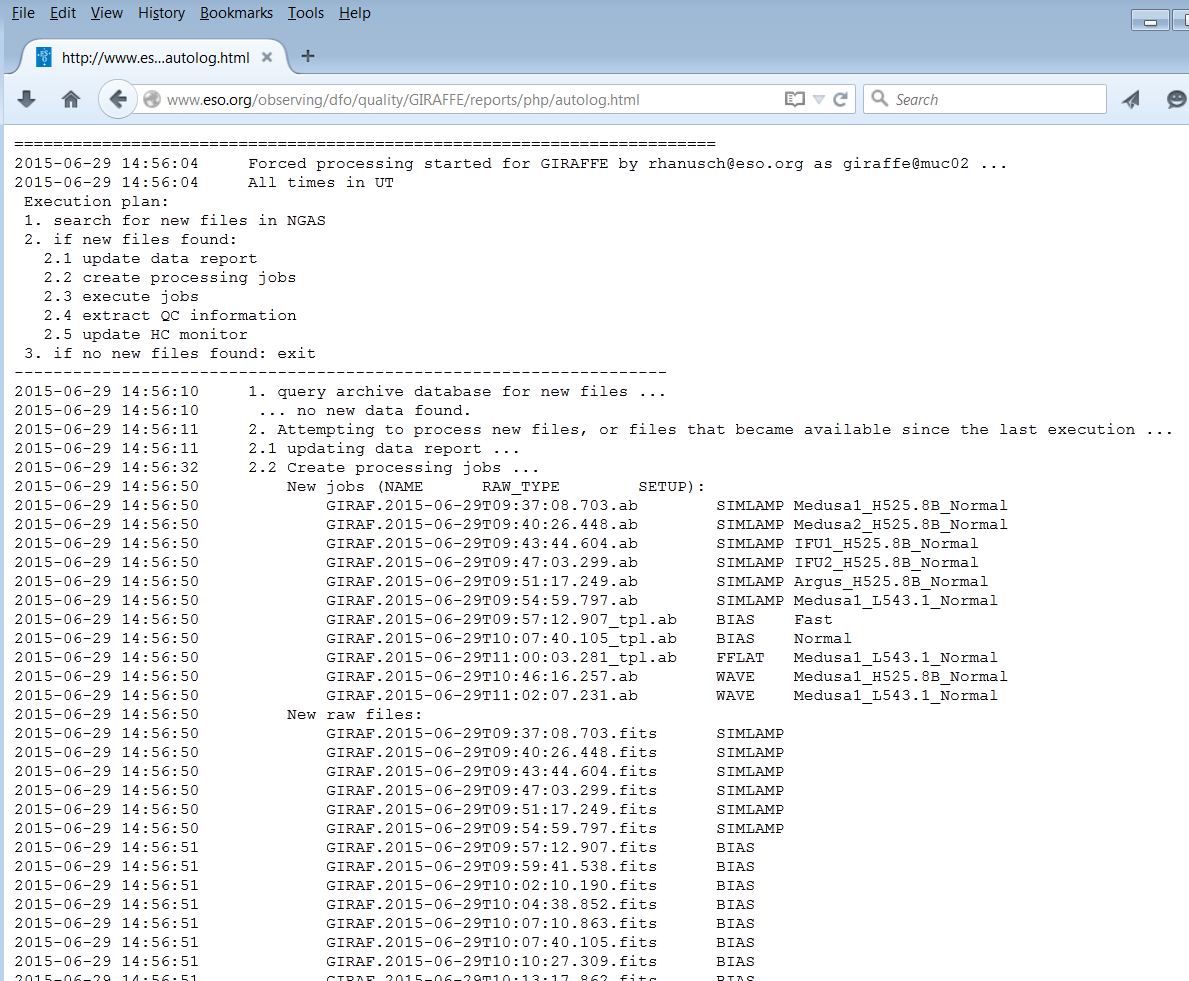

... and later the progress. Once finished, it sends again an email notification, with the complete processing log and some statistics (number of processed files, list of HC reports updated).

This file auto-refreshes every minute. |

|

Cases of conflict between forced and scheduled instances of autoDaily:

| Case | Behaviour |
|---|---|
| forced autoDaily, without scheduled autoDaily interfering | nothing special, just like a command-line call |
| forced autoDaily runs into a scheduled autoDaily session | forced autoDaily will be terminated (since its ultimate goal is already being achieved by the scheduled instance); email about termination sent to originator |
| scheduled autoDaily runs into forced autoDaily session | scheduled instance goes into the wait loop, just like for any other pre-existing instance |
| forced autoDaily session runs into another forced session | second session waits until $TIMEOUT is reached, then it terminates; email sent to originator |
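The conflict cases above amount to a small decision table. A Python sketch of that table follows; the mode names and action labels are illustrative only and are not return codes or options of autoDaily. The scheduled-versus-scheduled entry reflects the wait loop described in execution mode II.

```python
# Keys: (mode of the starting session, mode of the session already running or None).
# Values: illustrative action labels, not actual autoDaily return codes.
ACTIONS = {
    ("forced", None): "run",
    ("scheduled", None): "run",
    ("forced", "scheduled"): "terminate_and_notify",
    ("scheduled", "forced"): "wait",
    ("scheduled", "scheduled"): "wait",
    ("forced", "forced"): "wait_then_terminate_and_notify",
}


def resolve_conflict(new_mode, running_mode=None):
    """Look up what a newly started session should do."""
    try:
        return ACTIONS[(new_mode, running_mode)]
    except KeyError:
        raise ValueError(f"unknown mode combination: {new_mode!r}, {running_mode!r}")
```

Keeping the cases in a dictionary rather than nested conditionals makes it straightforward to compare the code against the table row by row.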

Output. The tool returns, for all processed dates:

- a set of executed CALIB ABs, AB logs, processing logs

- QC and scoring reports, as produced by processQC

- ingested QC1 parameters

- pipeline products ready for review (with dfos tool certifyProducts)

- updated trending plots

- execution time is written into the DFO database table exec_time.

The next steps in the daily workflow (certifyProducts and moveProducts for CALIB) are manually launched from the dfoMonitor since they require interaction and decisions.

Execution log. The execution log is found under $DFO_MON_DIR/AUTO_DAILY. This log file mainly consists of the merged log files of all participating dfos tools. Since a full execution may take hours, the log file is kept temporarily during runtime, as $TMP_DIR/autolog. After termination, it is written into the final AD_<execution date>.log. During execution, the temporary log file can be watched on the dfoMonitor (click the red button, or the 'log' link in the top right part). In forced mode, the log file can be followed in the browser window.

The autoDaily logs are kept separate from the cronjob logs (under CRON_LOGS) since otherwise it might become difficult to find them.

The tool creates another type of log file, the AB creation log, as ABL_<date>.log, also under $DFO_MON_DIR/AUTO_DAILY. It lists each single AB created, with the creation timestamp. This is useful for the incremental mode and helps checking

- when a particular AB was created,

- if an AB has been created multiple times.

Outdated log files (2 years or older, by year) are auto-deleted.

How to use

Type autoDaily -h for on-line help, and autoDaily -v for the version number.

The standard way of calling the tool is as a cronjob.

You can also call the tool from the command line, or via the php interface linked to the HC monitor.

Call 'autoDaily -D' if you want the full execution of a custom date list $DFO_MON_DIR/list_data_dates.

Configuration file

The tool configuration file (config.autoDaily) defines:

| Key | Value (example) | Description |
|---|---|---|
| USER | giraffe | needs to be set for cronjob enabling |
| DISK_SPACE | 95% | stop if disk space occupation exceeds $DISK_SPACE |
| OTHER_TIMEOUT | 50 | timeout parameter for waiting for other dfos tools (in minutes); should be 50 or less! |
| PGI_PREPROC | pgi_fork | optional, user-provided plugin script to manipulate the workflow in a non-standard way |
| FLASHBACK | NO | YES or NO: if YES, tool will backwards-process dates older than 2 days if they were incomplete and ngasMonitor reports a new file (default: NO; evaluated only if ENABLE_INCREM=YES) |
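To show how these keys interact, here is a Python sketch that reads such a configuration and derives the effective settings. It rests on assumptions: the on-disk format is taken to be whitespace-separated KEY VALUE lines with `#` comments, the function names are hypothetical, and the fallback values for DISK_SPACE and OTHER_TIMEOUT simply reuse the example values from the table (only the FLASHBACK default of NO is stated in the text).

```python
import shutil

MAX_TIMEOUT_MIN = 50  # OTHER_TIMEOUT is limited to 50 minutes according to the text


def parse_config(text):
    """Parse 'KEY VALUE' lines; '#' starts a comment (assumed file format)."""
    cfg = {}
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        parts = line.split(None, 1)
        if len(parts) == 2:
            cfg[parts[0]] = parts[1].strip()
    return cfg


def effective_settings(cfg):
    """Derive the values the workflow would act on."""
    return {
        # Values above the limit are reduced to it.
        "other_timeout_min": min(int(cfg.get("OTHER_TIMEOUT", "50")), MAX_TIMEOUT_MIN),
        "disk_space_pct": int(cfg.get("DISK_SPACE", "95%").rstrip("%")),
        "flashback": cfg.get("FLASHBACK", "NO").upper() == "YES",
        "pgi_preproc": cfg.get("PGI_PREPROC"),  # None if no plugin is configured
    }


def disk_occupation_pct(path="/"):
    """Current disk occupation of the file system holding path, in percent."""
    usage = shutil.disk_usage(path)
    return 100.0 * usage.used / usage.total
```

A caller would stop processing when `disk_occupation_pct(...)` exceeds `effective_settings(cfg)["disk_space_pct"]`.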

Operational hints.

- Dates to execute (incremental mode for fast data transfer). For all Paranal instruments, the data are delivered in near-real time. They appear in the archive continuously. This is the standard pattern. The date list usually contains only one date, the current one. autoDaily is called once per hour. Any new file will be used for creating and processing ABs. This approach ensures the optimal timescale for QC feedback (one hour or less) which is the operational commitment.

- Suggested autoDaily cronjob pattern is once per hour, some time (say 10 minutes) after calChecker: e.g. "24 * * * *" (24 minutes after every hour; arbitrary offset to avoid simultaneous queries from all dfo machines). Minimum pattern is once per hour during daytime calibrations, in order to have calChecker, CALIB product results and HealthCheck plots up-to-date within one hour.

- For the forced mode of autoDaily to work properly, you need an additional line in your cronjob file, with a cadence of one minute (!). See the dfosCron page for more.

- dfoMonitor supports autoDaily by links to the current list_data_dates and the execution log, and by displaying flags and progress information if autoDaily is executing.

- The header download is not included in autoDaily since this is done by calChecker, every half hour, and does not need to be repeated. Only in forced mode, headers are downloaded.

- Interference with other similar dfos processes should be avoided. Especially createAB and processQC are sensitive since they are not prepared for parallel execution, and they may take some time. autoDaily checks for the following tools (per $USER):

If any of these is found by 'ps -wfC <tool> | grep $USER | grep -v CMD', autoDaily sleeps for 60 sec and then tries again. It will exit after $OTHER_TIMEOUT minutes. You would then need to investigate the origin of the problem (if any) and launch createAB etc. manually, or use the jump option (-C) or the manual option (-D). The plugin option PGI_PREPROC can be used to fine-tune autoDaily behaviour.

If the tool finds an instance of 'createAB ... -r' ("re-createAB"), it is killed since it is an interactive session and can be re-called anytime.

- With autoDaily being fired once per hour, the configuration key OTHER_TIMEOUT should be no larger than 50 [minutes]. This is enforced by the tool.

- The configuration key FLASHBACK can be used to control the behaviour of autoDaily when data deliveries are delayed (by several days). FLASHBACK=NO removes any date from the dates list which is older than 2 days. This is useful if that date is still incomplete (since e.g. a single science file is missing), but you have certified and moved all CALIB ABs already. Otherwise, with FLASHBACK=YES, the tool will start reprocessing all CALIBs for that date once the missing file becomes available. You can avoid this unwanted behaviour by setting FLASHBACK=NO (which is the default anyway).

- Execution time of autoDaily is written into the DFO database table exec_time. Find the WISQ plots here. They can be useful to monitor as shiftleader.
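The interference check mentioned in the hints above filters the text printed by 'ps -wfC <tool>' with 'grep $USER | grep -v CMD'. The Python sketch below mimics that filter on already captured `ps` output; the function names are hypothetical, and the test for a 're-createAB' session assumes the `-r` option appears as a separate word on the command line.

```python
def matching_processes(ps_output, user):
    """Emulate `grep $USER | grep -v CMD` on the text printed by ps.

    Keeps lines that mention the user and drops the header line,
    which contains the column title CMD.
    """
    return [line for line in ps_output.splitlines()
            if user in line and "CMD" not in line]


def is_recreate_session(ps_line):
    """Guess whether a ps line is an interactive 'createAB ... -r' call.

    Assumption: the tool name is the last path component of one word
    and -r is given as a separate word.
    """
    tokens = ps_line.split()
    has_tool = any(tok.rsplit("/", 1)[-1] == "createAB" for tok in tokens)
    return has_tool and "-r" in tokens
```

Like the shell pipeline it imitates, the filter is purely textual, so a user name that happens to occur elsewhere in a line would also match.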

Last update: March 6, 2024 by bwolff